Optimization of Electrical Stimulation Patterns for Artificial Vision Implants

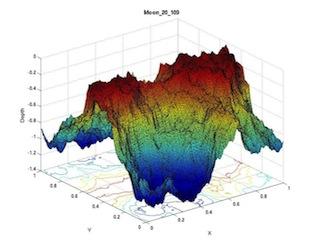

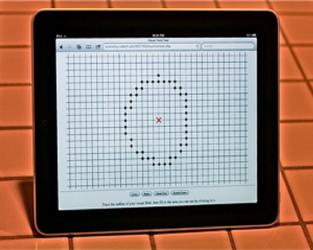

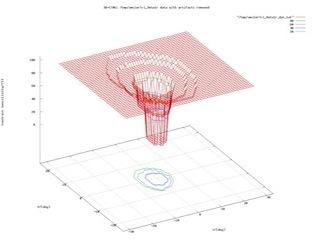

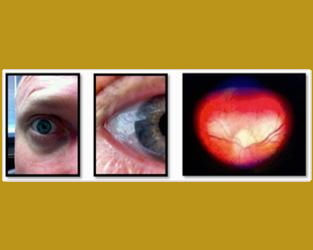

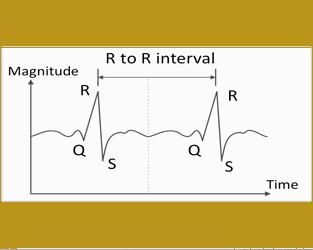

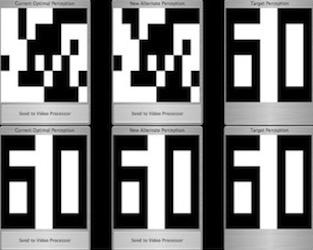

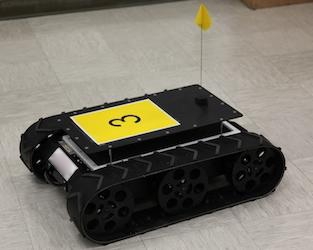

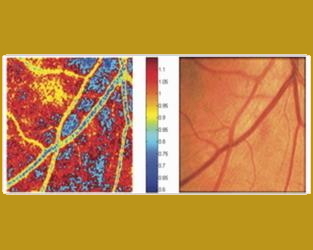

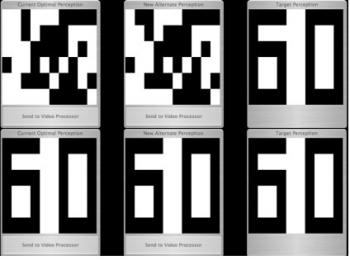

Predicting how to electrically stimulate the retina of a blind subject via the Artificial Retina, a visual prosthesis, so as to elicit a desired visual perception is a major challenge. In epi-retinal stimulation, as used by the Artificial Retina, the electrical stimulus is delivered at the ganglion cell layer, i.e., at the end of the retinal neural network and processing cascade, feeding directly into the optic nerve. The electronic system must therefore substitute for the information processing normally performed by retinal circuitry, which makes it difficult to elicit a visual perception that matches an object or scene as captured by the camera system that drives the prosthesis.

This research was part of the collaborative U.S. Department of Energy-funded Artificial Retina Project designed to restore sight to the blind. Improving the visual experience provided by a visual prosthesis such as the Artificial Retina requires the efficient translation of the camera image stream, pre-processed by the Artificial Vision Support System (AVS2), into spatio-temporal patterns of electrical stimulation of retinal tissue by the implanted electrode array. The Visual and Autonomous Exploration Systems Research Laboratory at Caltech, under the direction of Dr. Wolfgang Fink, addresses this challenge by developing and testing multivariate optimization algorithms that directly involve the blind subjects in evaluating their own visual perception. The optimization process helps define and refine the electrical stimulation patterns administered by the Artificial Retina so that blind subjects gain useful visual perceptions of the objects or scenes viewed with the external camera system and pre-processed by AVS2.

This novel approach to optimizing visual perception does not rely on assumptions regarding the residual processing capability or architecture of the affected retina, nor does it require a model of the retina.
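
The feedback-driven idea described above can be illustrated with a minimal sketch. It is not the laboratory's or the patents' actual algorithm: simulated annealing is used here only as one common example of a multivariate optimizer, and the names `optimize_pattern`, `rate_pattern`, and `make_simulated_subject`, the 16-electrode array, the normalized amplitude range of 0 to 1, and the iteration count are all assumptions made for illustration. The subject's perceptual rating is replaced by a hypothetical scoring function, and the optimizer never inspects a retina model; it only sees the score.

```python
import math
import random


def optimize_pattern(rate_pattern, n_electrodes=16, iterations=2000,
                     step=0.1, t_start=0.1, t_end=0.001, seed=0):
    """Simulated annealing over per-electrode amplitudes in [0, 1].

    rate_pattern is a callable that returns a score (higher is better).
    It stands in for the subject's feedback; the optimizer treats it as
    a black box and makes no assumption about the retina.
    """
    rng = random.Random(seed)
    current = [rng.random() for _ in range(n_electrodes)]
    current_score = rate_pattern(current)
    best, best_score = list(current), current_score

    for i in range(iterations):
        # Geometric cooling schedule from t_start down to t_end.
        t = t_start * (t_end / t_start) ** (i / max(1, iterations - 1))

        # Perturb one randomly chosen electrode, clamped to [0, 1].
        candidate = list(current)
        k = rng.randrange(n_electrodes)
        candidate[k] = min(1.0, max(0.0, candidate[k] + rng.uniform(-step, step)))

        score = rate_pattern(candidate)
        delta = score - current_score

        # Always accept improvements; accept worse patterns with a
        # probability that shrinks as the temperature drops.
        if delta >= 0 or rng.random() < math.exp(delta / t):
            current, current_score = candidate, score
            if current_score > best_score:
                best, best_score = list(current), current_score

    return best, best_score


def make_simulated_subject(target):
    """Hypothetical stand-in for a subject: rates a pattern by its
    negative squared distance to a hidden target pattern."""
    def rate(pattern):
        return -sum((p - q) ** 2 for p, q in zip(pattern, target))
    return rate


if __name__ == "__main__":
    subject = make_simulated_subject([0.2, 0.8] * 8)
    pattern, score = optimize_pattern(subject)
    print("best score:", round(score, 4))
```

In a real session each call to `rate_pattern` would correspond to presenting a stimulation pattern and collecting the subject's judgment, so the number of evaluations would have to be far smaller than the illustrative default used here.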

[Issued patent(s): U.S. 7,321,796 and U.S. 8,260,428]

Sponsor(s):